- Generative AI

- A category of artificial intelligence systems, including ChatGPT, that generate new text, code, images, or other content in response to user prompts, based on patterns learned from training data.

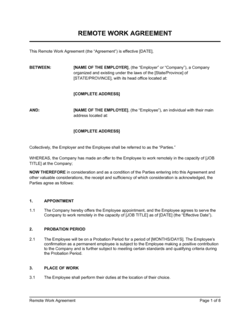

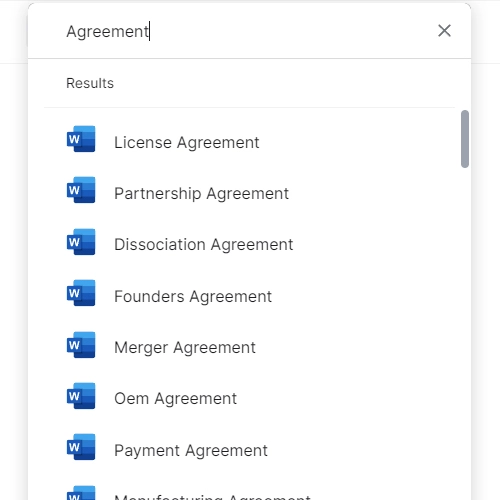

- Acceptable Use Policy (AUP)

- A written agreement or policy document that defines what an employee or user may and may not do with a specific technology, system, or tool.

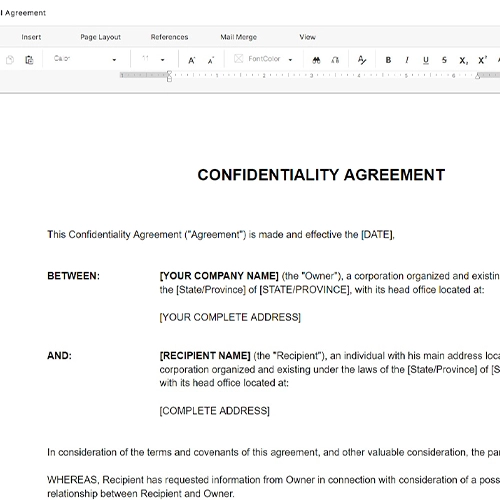

- Confidential Information

- Non-public business data, including trade secrets, client records, financial data, and proprietary processes, that must not be entered into external AI systems.

- AI-Generated Output

- Text, code, summaries, or other content produced by a generative AI tool in response to a user prompt, which may require human review before use.

- Hallucination

- A generative AI error in which the system produces plausible-sounding but factually incorrect or fabricated information and presents it as accurate.

- Prompt

- The instruction or input text a user submits to an AI tool such as ChatGPT to initiate a response or generate content.

- Data Residency

- The geographic location where data submitted to an AI system is stored or processed. It is a critical consideration for compliance with data privacy laws that restrict cross-border data transfers.

- Intellectual Property (IP) Ownership

- The legal rights to original works or inventions. Ownership of AI-generated content is contested and varies by jurisdiction, which makes clear policy documentation critical.

- Human Oversight Requirement

- A policy obligation requiring a qualified person to review, verify, and take responsibility for any AI-generated output before it is used, published, or shared externally.

- Data Classification

- A system for categorizing organizational data by sensitivity level — typically public, internal, confidential, and restricted — to determine which data may be shared with external tools.
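As a rough illustration, the typical sensitivity levels can be modeled as an ordered enumeration, with a single threshold deciding which levels may be submitted to an external AI tool. This is a minimal Python sketch under stated assumptions, not part of any standard: the names `Sensitivity`, `MAX_LEVEL_FOR_EXTERNAL_AI`, and `may_share_with_external_ai` are hypothetical, and the threshold (only public data may be shared) is an assumption that each organization would set in its own AUP.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    """Typical sensitivity levels, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Hypothetical policy threshold (an assumption, set by each organization):
# the most sensitive level that may be entered into an external AI tool.
MAX_LEVEL_FOR_EXTERNAL_AI = Sensitivity.PUBLIC


def may_share_with_external_ai(level: Sensitivity) -> bool:
    """Return True if data at this level may be submitted to an external AI tool."""
    # IntEnum members compare as integers, so the rule is one comparison.
    return level <= MAX_LEVEL_FOR_EXTERNAL_AI


if __name__ == "__main__":
    # Print the sharing decision for every level, in order of sensitivity.
    for level in Sensitivity:
        verdict = "allowed" if may_share_with_external_ai(level) else "blocked"
        print(f"{level.name:<12} {verdict}")
```

Ordering the levels as integers keeps the rule to a single comparison, so loosening or tightening the policy means changing only the threshold.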

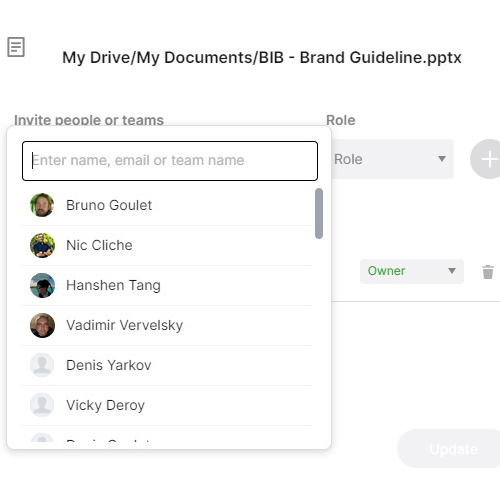

- Third-Party AI Provider

- An external company, such as OpenAI, that operates the AI system being used. Its terms of service and privacy policy govern how submitted data is handled.