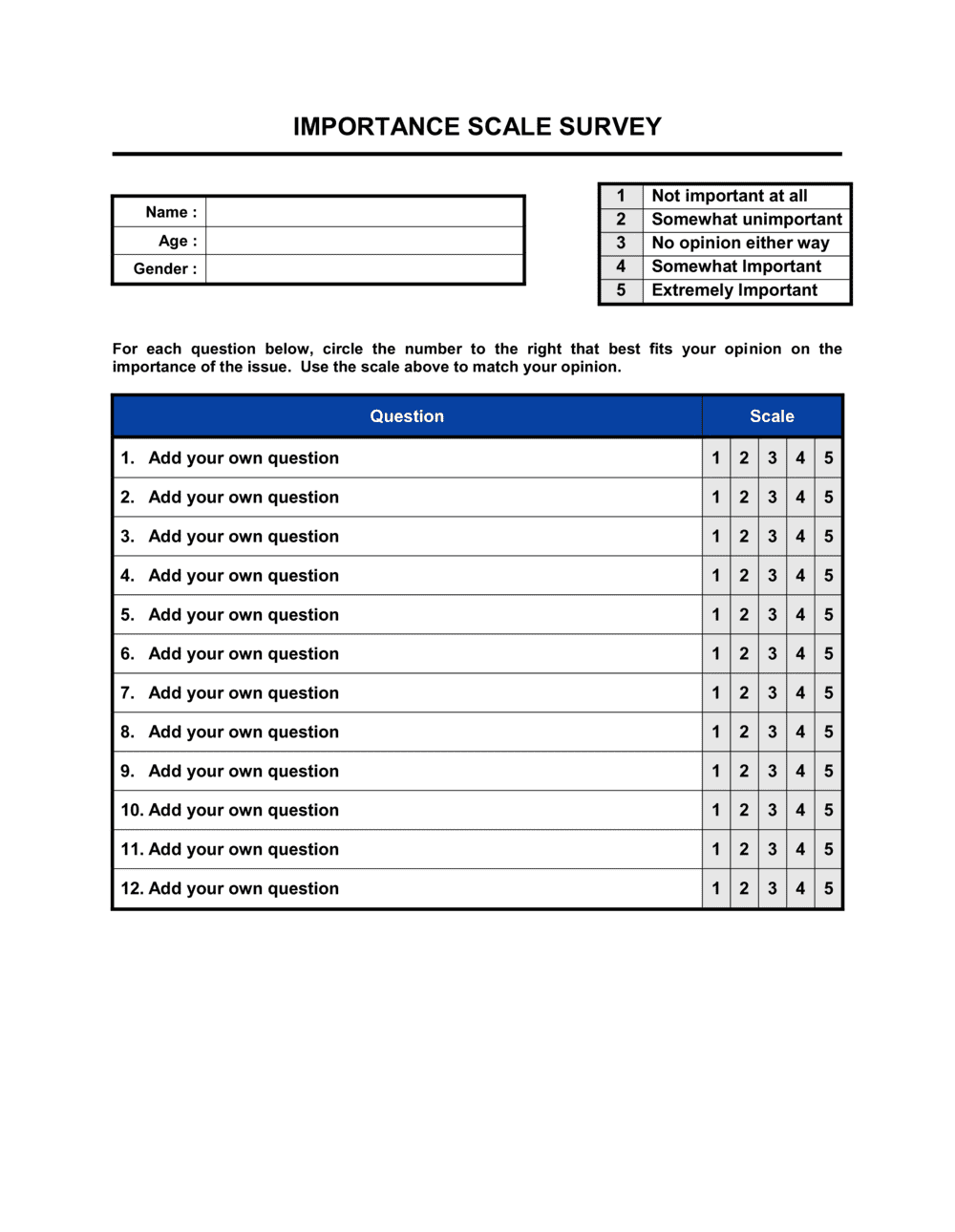

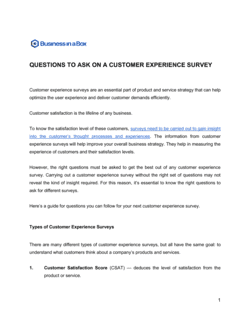

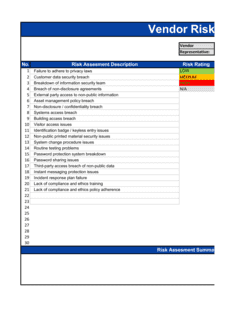

- Importance Scale

- A numeric or descriptive rating system — such as 1 (not important) to 5 (extremely important) — used to quantify how much a respondent values a given attribute.

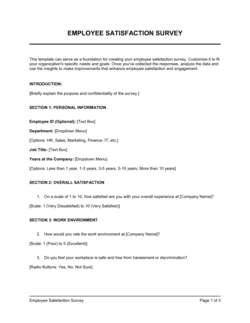

- Likert Scale

- A symmetric agree–disagree or importance rating scale, typically with 5 or 7 response options, used to measure attitudes or priorities in survey research.

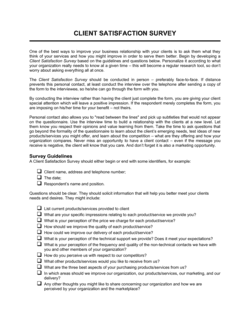

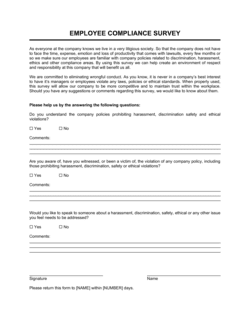

- Respondent Consent

- A documented acknowledgment by the survey participant confirming they understand how their responses will be collected, stored, and used.

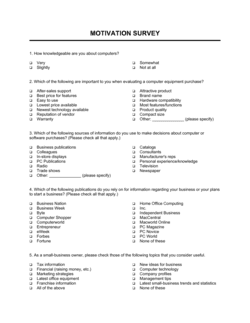

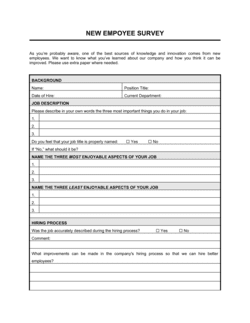

- Attribute

- A specific feature, criterion, or factor being rated in the survey — for example, 'price,' 'delivery speed,' or 'technical support quality.'

- Weighted Average

- A calculation that multiplies each rating by a weight before averaging, so that ratings from higher-priority respondent groups influence the aggregate score in proportion to their weight.

- Data Controller

- The organization or individual that determines the purposes and means of processing personal data collected through the survey, as defined under GDPR and similar privacy frameworks.
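To make the Weighted Average entry above concrete, here is a minimal sketch in Python. The function name `weighted_average` and the sample ratings and weights are illustrative assumptions, not part of any particular survey tool.

```python
def weighted_average(ratings, weights):
    """Return the sum of rating * weight divided by the sum of weights."""
    if len(ratings) != len(weights):
        raise ValueError("ratings and weights must have the same length")
    total_weight = sum(weights)
    if total_weight == 0:
        raise ValueError("weights must not sum to zero")
    return sum(r * w for r, w in zip(ratings, weights)) / total_weight


# Hypothetical data: three respondents rate one attribute on a 1-5 scale.
# The first respondent belongs to a higher-priority group, so counts double.
ratings = [5, 3, 4]
weights = [2.0, 1.0, 1.0]
score = weighted_average(ratings, weights)  # (5*2 + 3*1 + 4*1) / 4
```

With equal weights the result reduces to the ordinary mean, which is a quick way to sanity-check a weighting scheme.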

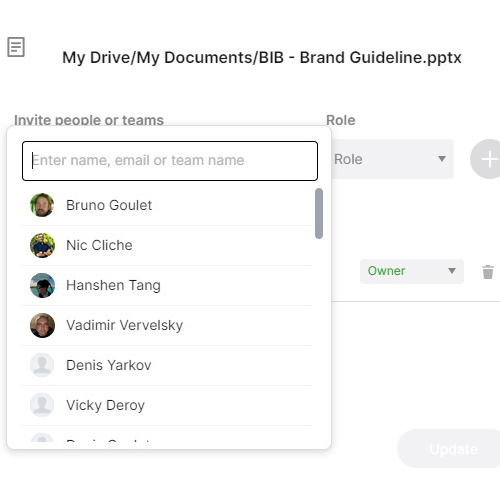

- Anonymization

- The process of removing or irreversibly masking identifying information from survey responses so that individual respondents cannot be identified from the data.

- Response Bias

- A systematic distortion in survey results caused by respondents answering inaccurately, for example by giving the answers they believe are expected or socially desirable.

- Informed Participation

- The principle that respondents must understand the purpose of the survey, how data will be used, and any consequences of participation before completing it.
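The Anonymization entry above can be sketched as a simple filtering step. This example assumes each response is a Python dictionary and that the field names in `IDENTIFYING_FIELDS` are the identifying ones; both are hypothetical and would differ per survey.

```python
# Hypothetical set of fields that directly identify a respondent.
IDENTIFYING_FIELDS = {"name", "email", "ip_address"}


def anonymize(response):
    """Return a copy of the response without the identifying fields."""
    return {
        field: value
        for field, value in response.items()
        if field not in IDENTIFYING_FIELDS
    }


raw = {"name": "Ada", "email": "ada@example.com", "price": 4, "delivery speed": 5}
clean = anonymize(raw)  # keeps only the attribute ratings
```

Dropping direct identifiers is only a starting point: the remaining fields can still identify someone in combination, so a real survey would need to review them as well.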

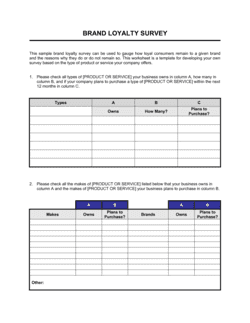

- Rating Matrix

- A table format in which rows list attributes and columns represent scale values, allowing respondents to rate multiple items consistently in a single view.

- Ordinal Data

- Survey data where responses indicate rank order — such as importance ratings — but the intervals between values are not necessarily equal.
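As a rough illustration of the Rating Matrix and Ordinal Data entries above, the sketch below stores a matrix as a dictionary keyed by attribute and summarizes each row with the median, which depends only on rank order and so does not assume equal intervals between scale values. The attribute names and ratings are made-up sample data.

```python
from statistics import median

# Hypothetical rating matrix: one row per attribute, one 1-5 rating
# per respondent in each row.
rating_matrix = {
    "price": [5, 4, 4, 2, 5],
    "delivery speed": [3, 3, 4, 2, 1],
}


def summarize(matrix):
    """Return the median rating for each attribute."""
    return {attribute: median(ratings) for attribute, ratings in matrix.items()}


summary = summarize(rating_matrix)
```

The mean is also commonly reported for importance ratings, but it treats the gap between 1 and 2 as equal to the gap between 4 and 5, which ordinal data does not guarantee.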