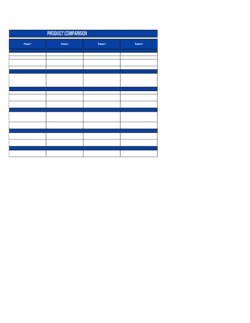

- Quality Benchmark

- A defined standard or reference point against which a product, service, or supplier is measured during evaluation.

- Weighted Scoring

- An evaluation method that assigns different levels of importance to each criterion, so higher-priority factors contribute more to the final score.
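As a minimal sketch of weighted scoring, the overall score can be computed as a weighted average of the criterion scores. The function name `weighted_score`, the criterion names, the ratings, and the weights below are all illustrative assumptions, not values from an actual evaluation.

```python
def weighted_score(scores, weights):
    """Return the weighted average of criterion scores.

    Both arguments are dicts keyed by criterion name (hypothetical names here).
    """
    if scores.keys() != weights.keys():
        raise ValueError("scores and weights must cover the same criteria")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    # Dividing by the total weight means the weights need not sum to exactly 1.
    return sum(scores[c] * weights[c] for c in scores) / total_weight


# Illustrative ratings on a 1-5 scale and assumed weights.
scores = {"defect_rate": 4, "delivery_reliability": 3, "spec_compliance": 5}
weights = {"defect_rate": 0.5, "delivery_reliability": 0.3, "spec_compliance": 0.2}

overall = weighted_score(scores, weights)
unweighted = sum(scores.values()) / len(scores)
```

With these assumed numbers the weighted result is about 3.9, slightly below the unweighted mean of 4.0, because the lower delivery rating carries more weight than the top compliance rating.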

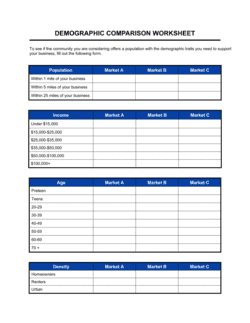

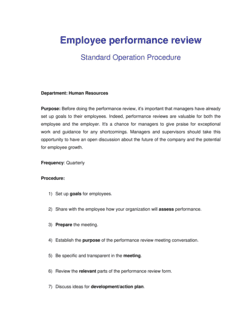

- Evaluation Criteria

- The specific attributes (such as defect rate, delivery reliability, or compliance with specifications) used to judge quality.

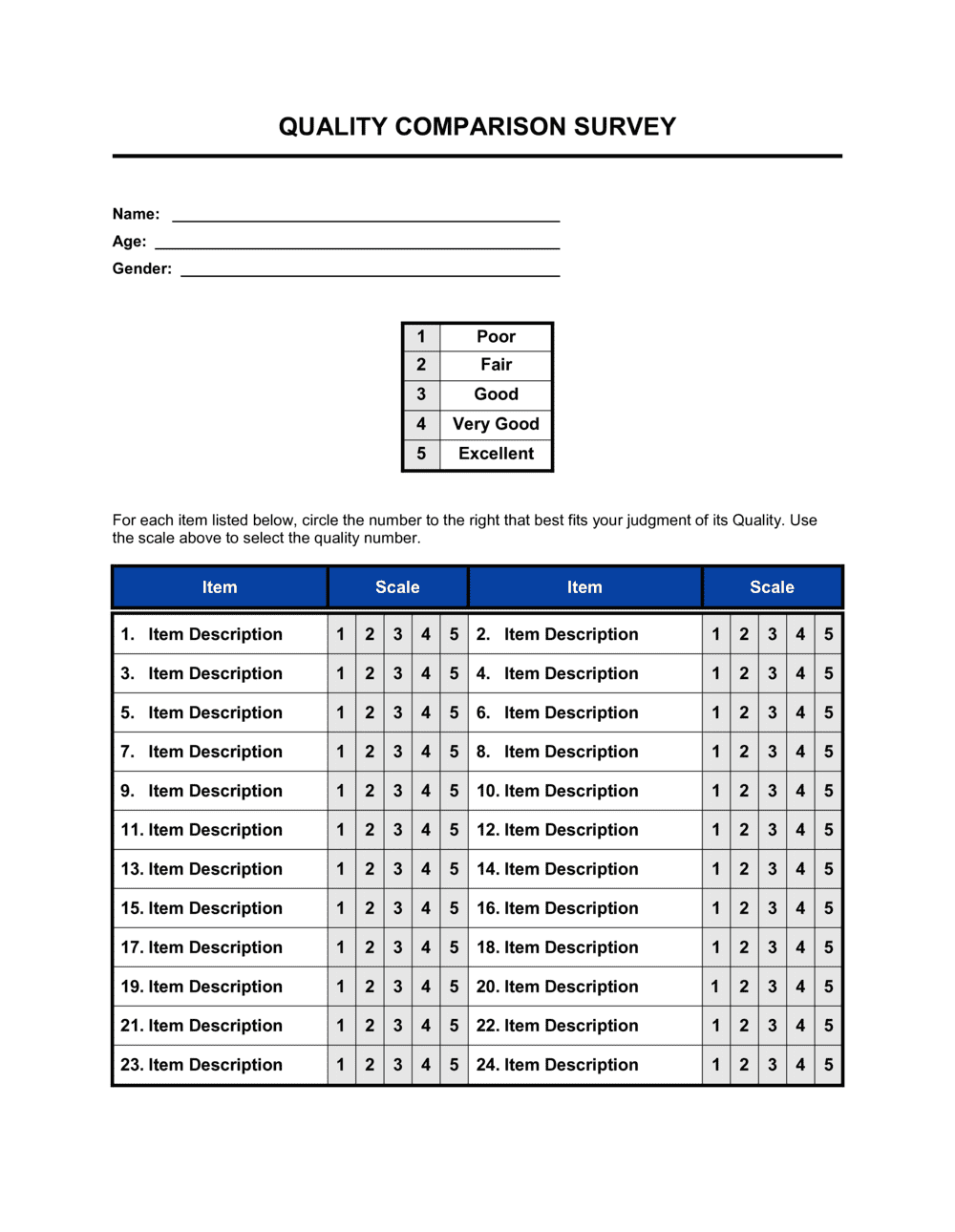

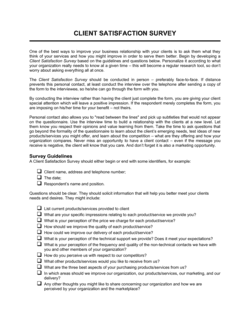

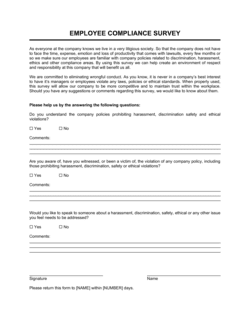

- Respondent

- The individual or organization completing the survey and providing quality assessments based on direct knowledge or experience.

- Likert Scale

- A rating scale, typically 1–5 or 1–7, on which an evaluator indicates how strongly they agree with a quality statement or how they rate a quality attribute.
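When ratings collected on scales of different lengths need to be compared, one common approach is a linear rescale to a shared range. The sketch below assumes scales that start at 1 and treats the scale points as evenly spaced, which is a simplification for ordinal data. The helper name `rescale_likert` and the 0–100 target range are hypothetical choices.

```python
def rescale_likert(rating, points, target_max=100.0):
    """Linearly map a rating on a 1..points scale onto 0..target_max.

    Assumes the scale starts at 1 and its points are evenly spaced.
    """
    if points < 2:
        raise ValueError("a Likert scale needs at least 2 points")
    if not 1 <= rating <= points:
        raise ValueError("rating is outside the scale")
    return (rating - 1) / (points - 1) * target_max


# The same raw rating lands in different places on different scales.
on_five_point = rescale_likert(4, 5)   # 75.0
on_seven_point = rescale_likert(4, 7)  # 50.0
```

A rating of 4 is near the top of a 5-point scale but sits at the midpoint of a 7-point scale, so raw values from the two scales should not be averaged without a conversion of this kind.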

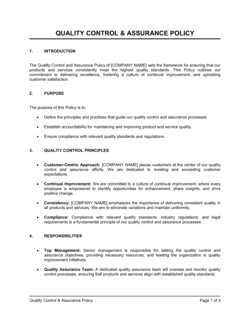

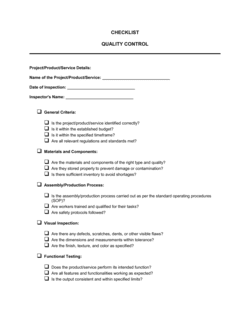

- Non-Conformance

- A documented instance where a product, service, or process fails to meet a specified quality requirement or standard.

- ISO 9001

- An internationally recognized standard for quality management systems, requiring documented processes, measurable objectives, and continual improvement.

- Corrective Action

- A documented step taken to eliminate the root cause of a detected non-conformance and prevent its recurrence.

- Supplier Scorecard

- A summary output of a quality evaluation that aggregates individual criterion scores into an overall performance rating for a vendor.

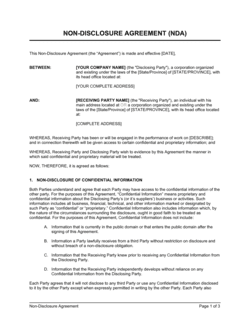

- Audit Trail

- A chronological record of evaluation activities, signatures, and findings that can be reviewed by an auditor or used in a dispute.

- Bias Disclosure

- A declaration by the evaluator of any financial, personal, or commercial relationships with the entities being compared that could influence their ratings.