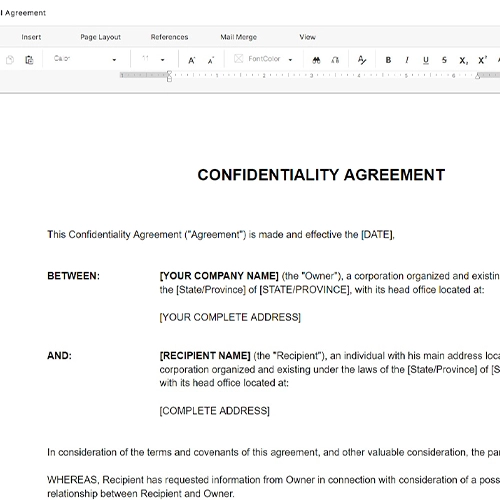

- Acceptable Use Policy (AUP)

- A written set of rules specifying how users may and may not use a platform, network, or service — forming the behavioral backbone of a chat room agreement.

- User-Generated Content (UGC)

- Any text, images, links, or media that a user posts or transmits through the platform, as distinct from content the operator itself publishes.

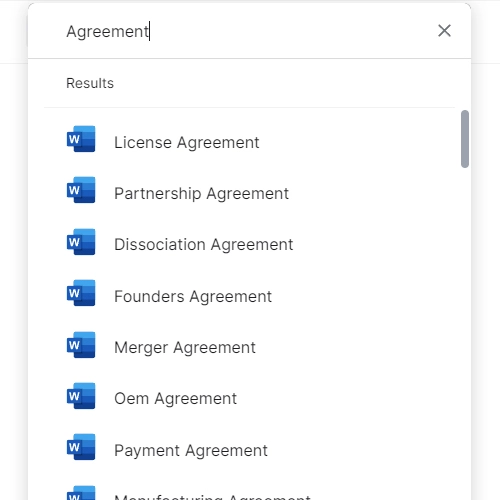

- Content License

- The grant of rights from a user to the platform operator allowing the operator to store, display, moderate, or remove content submitted through the chat.

- Moderation

- The process of reviewing, filtering, editing, or removing user content, and of suspending or banning the accounts of users who violate the platform's conduct rules.

- Limitation of Liability

- A clause capping the operator's financial exposure to users — typically limiting damages to fees paid or a fixed dollar amount — for claims arising from platform use.

- Safe Harbor (Section 230)

- A US legal protection under 47 U.S.C. § 230 that generally shields interactive computer service providers from liability for third-party content posted on their platforms.

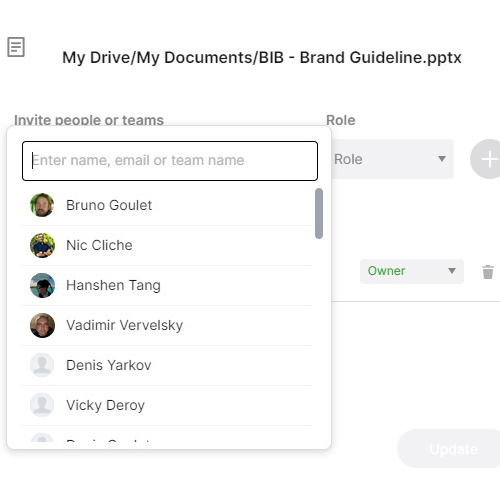

- Account Suspension

- Temporary restriction of a user's access to the chat platform, typically triggered by a conduct violation pending investigation or as a graduated enforcement step.

- Termination for Cause

- Permanent removal of a user's account due to a serious or repeated violation of the chat room agreement, without obligation to provide a refund or continued access.

- Indemnification

- A contractual obligation requiring the user to compensate the platform operator for any losses, legal costs, or claims arising from the user's conduct or content.

- Governing Law

- The jurisdiction whose laws will be used to interpret and enforce the agreement, typically the state or country where the platform operator is incorporated.

- COPPA

- The Children's Online Privacy Protection Act: a US federal law requiring operators of online services to obtain verifiable parental consent before collecting personal information from children under 13.

- GDPR

- The EU General Data Protection Regulation: a comprehensive data privacy framework requiring a lawful basis for processing the personal data of individuals in the EU, with fines for non-compliance of up to €20 million or 4% of worldwide annual turnover, whichever is higher.